Mistakes as agency

- Pavel Chvykov

- Jul 23, 2022


Updated: Jul 26, 2022

We’re all somehow familiar with the idea that a person's actions can be “too perfect,” to the point of seeming creepy, mechanical, or even dangerous. The classic examples come from sci-fi portrayals of humanoid robots, but the same feeling can arise simply from watching someone do something so well that it strikes us as strange.

Early in my PhD, I spent a year in a cognitive science research group at MIT thinking about what makes us perceive some things as “alive” or “agent-like,” and other things as “inanimate” or "mechanical." The classic motivation for this question is this simple video made by Fritz Heider and Marianne Simmel back in 1944. When viewers were asked to describe the video, they talked about a fight, an abduction, a rescue, a love story, but none said that there were some triangles and a circle moving around on a screen. The lab I worked with ran various experiments in which young children were shown simple shapes behaving in different ways, and then checked whether the children viewed these shapes as “objects” or “agents.” I tried to develop a simple theory of this distinction and benchmark it.

The hypothesis I was working on was that the key distinguishing factor is the apparent “planning horizon.” Whenever we view some dynamics, we try to understand and infer their causes. We hypothesized that if the behavior could be explained by a very short-term planner, it would look like an object (e.g., a ball rolling down a hill), while if long-term planning was required to explain the dynamics, it would look like an agent.

To test this, I looked at a simple toy system: a "point-agent" exploring a utility landscape. The video here shows the motion in a 1D landscape with two local utility optima (negative utility is plotted on the y-axis). In this setting, if the short-term planner is to look like the motion of an object, then Newton’s equations need to be recovered as the short-horizon limit of some more general control theory. This turns out to be the case if we set up the planner's reward function to minimize accelerations and maximize utility, integrated across its planning horizon. As a nice bonus, when the planning horizon is short but nonzero, the first correction looks like an additional damping term, so the motion still fits naturally into the "object-like" paradigm. The first video here shows the resulting motion, which clearly looks like a ball rolling in an energy landscape, with no agent-like characteristics.
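To see how Newton's equations and the damping correction can come out of such a planner, here is a back-of-the-envelope version of the short-horizon limit. It rests on a simplifying assumption made here purely for illustration, not necessarily the setup behind the videos: over a short horizon $T$ the plan is a single constant acceleration $a$, starting from the current position $x$ and velocity $v$, and the planner minimizes the accumulated acceleration penalty minus the accumulated utility $U$:

$$
J(a) = \int_0^T \left[ \frac{a^2}{2} - U\!\left(x + v s + \tfrac{1}{2} a s^2\right) \right] ds
$$

Expanding $U$ to second order around $x$ and setting $\partial J / \partial a = 0$ gives, to leading orders in $T$,

$$
a \approx \frac{T^2}{6}\, U'(x) + \frac{T^3}{8}\, U''(x)\, v
$$

The first term is Newton's equation $m\ddot{x} = -V'(x)$ for a potential energy $V = -U$ with an effective mass $m = 6/T^2$. The second term is proportional to velocity: near a utility optimum, where $U''(x) < 0$, it acts as a damping term $-\gamma v$ with $\gamma = \tfrac{T^3}{8}\lvert U''(x)\rvert$ (near a utility minimum the sign flips).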

To see a difference between an agent that can plan ahead and one that cannot, I initialized the agent near a local utility optimum, so that to reach the global optimum it had to get over a hump of low utility. The second video here shows the motion when the agent can plan far enough ahead to get over this barrier. Although it seems to do everything perfectly to optimize the specified reward, smoothly finding its way to the global optimum, the motion still hardly looks agent-like at all. This seems to invalidate our original hypothesis that agency is about planning ahead.

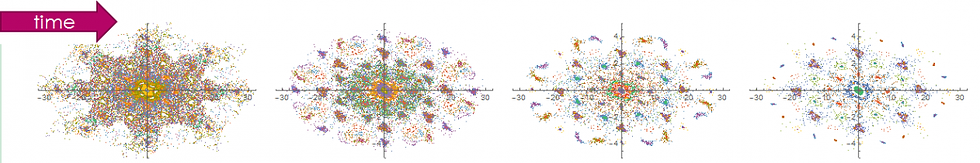

But while setting up and running these experiments, I stumbled across a curious effect. Because I was optimizing the reward numerically, the optimizer sometimes settled on sub-optimal dynamics: a local optimum of the reward function rather than the global one. This never happened for the short-term planner, since the optimization there was over a very small "plan space," just the next time-step. But in the larger search space of the long-term planner it was quite common, especially near bifurcation or decision points, and it sometimes made our "agent" take a few steps in the wrong direction. I noticed that precisely when it made such mistakes, it started looking a lot more agent-like, or "alive." The third video here shows two examples of such dynamics.
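The kind of sub-optimal plan described above is easy to reproduce in a toy version of the setup. The Python sketch below is an illustration under assumptions, not the original experiment code: the double-well `utility` (global optimum near x = +1, weaker local optimum near x = -1), the weight `lam`, the 30-step horizon, the use of discrete second differences as accelerations, and plain gradient descent over the whole planned trajectory are all hypothetical choices. Starting from rest at the weaker optimum, the same optimizer returns either a plan that stays put or a plan that crosses the barrier, depending only on the initial guess.

```python
# Toy sketch (hypothetical setup, not the original experiment code):
# a planner optimizes a whole trajectory by gradient descent and can
# get stuck in a local optimum of its objective.


def utility(x: float) -> float:
    """Hypothetical double-well utility: best near x = +1, worse optimum near x = -1."""
    return -((x * x - 1.0) ** 2) + 0.3 * x


def utility_grad(x: float) -> float:
    """Derivative of utility(x)."""
    return -4.0 * x * (x * x - 1.0) + 0.3


def plan_cost(x_prev: float, x0: float, plan: list[float], lam: float = 0.2) -> float:
    """Objective to minimize: squared accelerations minus lam * utility over the horizon."""
    path = [x_prev, x0] + plan
    accel_cost = sum(
        (path[k + 2] - 2.0 * path[k + 1] + path[k]) ** 2 / 2.0 for k in range(len(plan))
    )
    return accel_cost - lam * sum(utility(x) for x in plan)


def optimize_plan(
    x_prev: float, x0: float, plan: list[float],
    lam: float = 0.2, lr: float = 0.05, steps: int = 4000,
) -> list[float]:
    """Plain gradient descent on all planned positions; it stops in whatever local optimum is nearby."""
    plan = list(plan)
    n = len(plan)
    for _ in range(steps):
        path = [x_prev, x0] + plan
        # acc[k] is the discrete acceleration at path[k + 1]
        acc = [path[k + 2] - 2.0 * path[k + 1] + path[k] for k in range(n)]
        grad = []
        for j in range(n):
            i = j + 2  # position of plan[j] inside path
            g = 0.0
            # path[i] enters acc[i - 2] (coeff 1), acc[i - 1] (coeff -2), acc[i] (coeff 1)
            for k, coeff in ((i - 2, 1.0), (i - 1, -2.0), (i, 1.0)):
                if 0 <= k < n:
                    g += coeff * acc[k]
            grad.append(g - lam * utility_grad(plan[j]))
        plan = [x - lr * g for x, g in zip(plan, grad)]
    return plan


# Agent starts at rest at the weaker optimum; only the initial guess differs.
X_PREV, X0, N = -1.0, -1.0, 30
stay_plan = optimize_plan(X_PREV, X0, [-1.0] * N)
go_plan = optimize_plan(X_PREV, X0, [-1.0 + 2.0 * (j + 1) / N for j in range(N)])

print(f"stay: ends at {stay_plan[-1]:+.2f}, cost {plan_cost(X_PREV, X0, stay_plan):.3f}")
print(f"go:   ends at {go_plan[-1]:+.2f}, cost {plan_cost(X_PREV, X0, go_plan):.3f}")
```

Because the two runs differ only in their starting guess, the higher cost of the stay-put plan is purely an artifact of where the optimizer began, which is the kind of locally stuck, sub-optimal dynamics described above.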

Sadly, I did not have time to develop these preliminary insights much further, and this work remains unpublished; I'm writing it up here so that it might see the light of day. But I think these experiments suggest a cool new hypothesis, and one we don't tend to hear about very often: that whether we perceive something as object-like and mechanical or as agent-like and alive may depend primarily on its capacity to make mistakes, to be imperfect, fallible, or "only human."

This brings us back to the point at the start of this post: actions that are too perfect can look eerily inanimate, even when we see a human performing them. Think of the difference between a human ballet dancer and an ideal robotic one: the human's slight imperfections make them somehow relatable. In CGI animation, for example, characters need some unnecessary movements, and each step must differ from every other. We often appreciate hand-crafted art more than perfect machine-generated decoration for the same sort of minute asymmetry, which makes it relatable and thus admirable. In vocal recording, a song is often recorded twice, once for the L channel and once for the R, rather than copying a single take to both (see "double tracking"); the slight differences make the sound "bigger" and "more alive." And so on.

I find this especially exciting because it could bring a new perspective to the narrative our society has around mistakes. If this hypothesis were established, perhaps we could stop seeing mistakes as something to be shamed and avoided at all costs, and instead see them as an essential part of being alive, and in fact the main reason we assign agency to anything at all. This might then affect not just our perception of shapes on a screen, or of each other, but also of ourselves. We already have some notion that living a perfectly successful routine, doing only things we are good at, can lead to a dull, gray, or mechanical life. More "aliveness," by contrast, comes when we try new things or learn, pushing our limits into areas where we aren't perfect and mistakes are abundant.

"Agency" is a subtle and complex concept, which is also somehow so important to us personally and socially. Perhaps we greatly underappreciate the integral role that mistakes play in it?
